For decades, the technological singularity—the hypothetical point in time when artificial intelligence advances so rapidly that it creates an irreversible change in human civilization—was the domain of science fiction writers and radical futurists. In 2026, it has become a metric on a spreadsheet.

As of today, February 14, 2026, the conversation in Silicon Valley and global research hubs has shifted from speculative philosophy to rigorous mathematical modeling. The question is no longer "if" superintelligence will arrive, but exactly "when." A compelling new analysis by Cam Pedersen argues that if we track the acceleration of recent data correctly, we can pinpoint the arrival of the singularity to a specific day of the week: a Tuesday.

The Hyperbolic Curve: Why Tuesday?

The headline-grabbing prediction comes from Cam Pedersen, whose analysis suggests that human observers are ill-equipped to grasp the speed of current AI progress intuitively. Humans evolved to reason about linear progression, or at most steady exponential growth. Pedersen argues, however, that AI development is following a hyperbolic curve. Exponential growth accelerates but keeps a constant doubling time. Hyperbolic growth instead approaches a vertical asymptote: mathematically, it reaches infinite progress in a finite amount of time.
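A minimal sketch of the difference, assuming the simplest textbook forms: exponential growth `y0 * 2**(t / doubling_time)` and hyperbolic growth `k / (t_s - t)`, where `t_s` is the singularity time. The function names and parameter values are illustrative and are not taken from Pedersen's analysis.

```python
import math

T_S = 10.0  # hypothetical singularity time, in arbitrary units


def exponential(t: float, y0: float = 1.0, doubling_time: float = 1.0) -> float:
    # Exponential growth: constant doubling time, finite for every finite t.
    return y0 * 2 ** (t / doubling_time)


def hyperbolic(t: float, k: float = 1.0, t_s: float = T_S) -> float:
    # Hyperbolic growth y = k / (t_s - t): finite for t < t_s, diverges as t approaches t_s.
    return math.inf if t >= t_s else k / (t_s - t)


# For the hyperbola, y doubles whenever the remaining gap (t_s - t) halves,
# so each successive doubling takes half as long as the previous one.
t, gap = 0.0, T_S
for _ in range(5):
    next_t = T_S - gap / 2
    print(f"y doubles from {hyperbolic(t):.2f} to {hyperbolic(next_t):.2f} "
          f"in {next_t - t:.3f} time units")
    t, gap = next_t, gap / 2
```

The loop prints doubling intervals of 5, 2.5, 1.25, and so on. Because the hyperbola doubles whenever the remaining gap to `t_s` halves, its doublings keep speeding up and infinitely many of them fit before `t_s`, whereas the exponential curve keeps the same doubling time forever.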

Pedersen attempts to fit five distinct metrics of AI progress to these curves. His conclusion is that the lines converge in the very near future, specifically on a Tuesday. While the specific day serves as a provocative anchor for an abstract concept, the underlying math suggests that we are rapidly running out of "time" on the X-axis before the curve goes vertical.[1]
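One simple way to locate a curve's vertical asymptote can be sketched under loud assumptions. If a metric follows `y = k / (t_s - t)`, then `1 / y` falls on a straight line in `t` that hits zero at `t_s`, so an ordinary least-squares fit of `1 / y` against `t` yields an estimate of `t_s`. This is not necessarily Pedersen's fitting method, and the three "metrics" below are synthetic, noise-free series generated with `t_s = 100` in arbitrary time units, not real benchmark data.

```python
def hyperbolic_series(k: float, t_s: float, times: list[float]) -> list[float]:
    # Synthetic data: exact samples of y = k / (t_s - t), with no noise.
    return [k / (t_s - t) for t in times]


def fit_hyperbola(times: list[float], values: list[float]) -> tuple[float, float]:
    """Least-squares fit of 1/y = t_s/k - t/k. Returns (k, t_s)."""
    zs = [1.0 / y for y in values]  # linearize: 1/y is a straight line in t
    n = len(times)
    mean_t = sum(times) / n
    mean_z = sum(zs) / n
    s_tt = sum((t - mean_t) ** 2 for t in times)
    s_tz = sum((t - mean_t) * (z - mean_z) for t, z in zip(times, zs))
    slope = s_tz / s_tt                  # estimates -1/k
    intercept = mean_z - slope * mean_t  # estimates t_s/k
    return -1.0 / slope, -intercept / slope


TRUE_T_S = 100.0
times = [60.0, 65.0, 70.0, 75.0, 80.0, 85.0, 90.0, 95.0]
# Three hypothetical metrics with different scales but the same asymptote.
metrics = {"metric_a": 2.0, "metric_b": 50.0, "metric_c": 0.3}

estimates = {}
for name, k in metrics.items():
    k_hat, t_s_hat = fit_hyperbola(times, hyperbolic_series(k, TRUE_T_S, times))
    estimates[name] = t_s_hat
    print(f"{name}: k ~ {k_hat:.3f}, estimated t_s ~ {t_s_hat:.3f}")

spread = max(estimates.values()) - min(estimates.values())
print(f"spread of asymptote estimates: {spread:.6f}")
```

Fitting several independent series and checking whether their estimated `t_s` values agree mirrors the idea of metrics "converging." With real, noisy data the estimate can be fragile: the asymptote is an extrapolation beyond the last observation, and least squares on `1 / y` weights points differently than a fit on `y` itself.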

From MMLU to ARC-AGI: The Benchmark Crisis

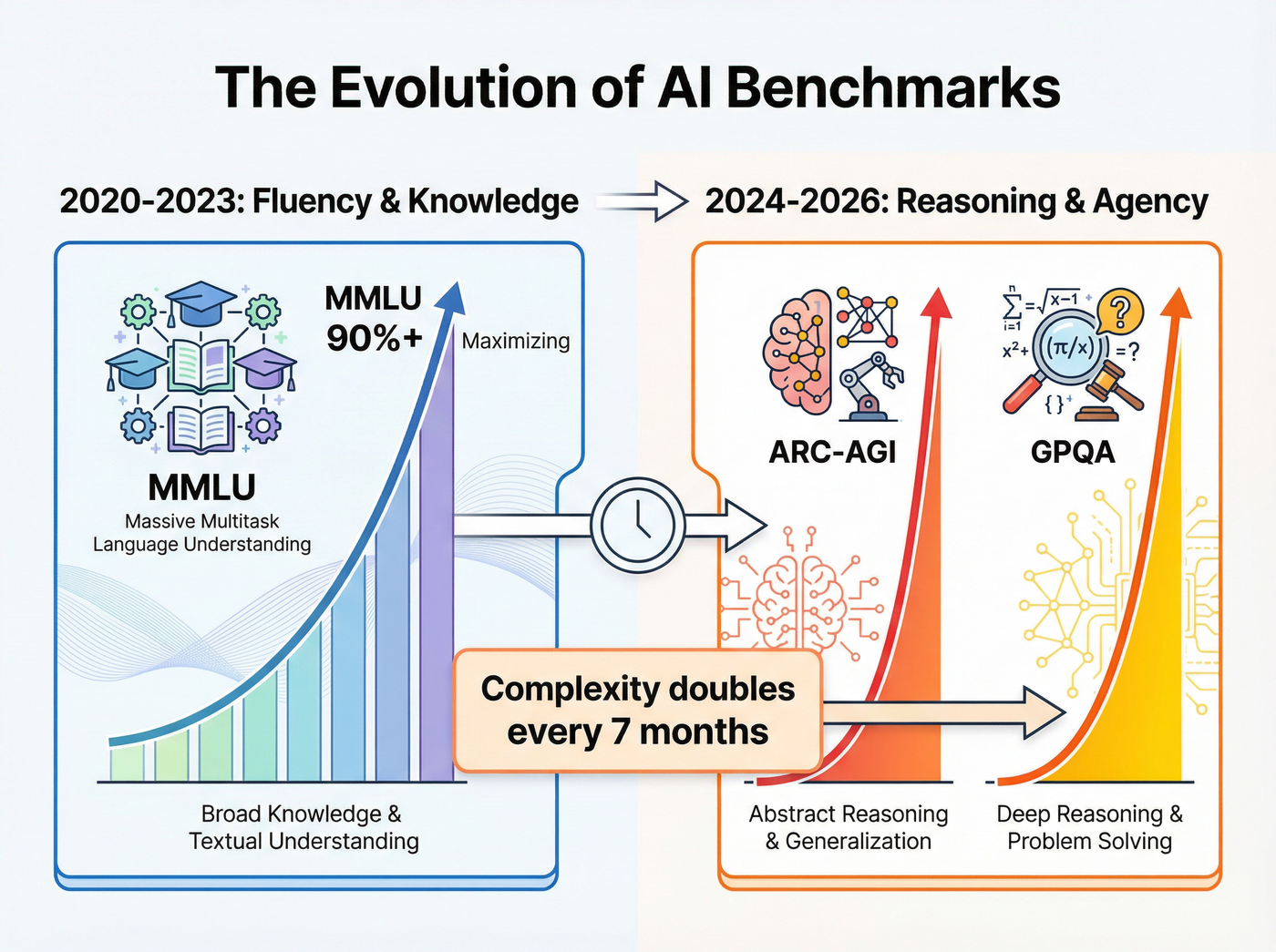

Part of the reason the timeline feels so compressed is that our measuring sticks keep breaking. For years, the Massive Multitask Language Understanding (MMLU) benchmark was the gold standard, testing models on 57 subjects ranging from STEM to the humanities. Frontier models, starting with the GPT-4 and Claude 3 generation, have effectively "maxed out" this test, leaving too little headroom to distinguish between them.[6]

To get a clearer picture of the singularity, researchers have pivoted to far more difficult evaluations:

- GPQA (Graduate-Level Google-Proof Q&A): Tests the ability to answer questions that require PhD-level expertise and cannot easily be solved with a search engine.

- ARC-AGI: A benchmark designed to measure fluid intelligence and reasoning rather than memorized knowledge.

The speed at which models are conquering these new frontiers is startling. In less than two years, performance on the incredibly difficult ARC-AGI benchmark jumped from a trivial 5% to over 75% with the release of reasoning-heavy models like OpenAI's o3. This jump signifies a phase shift: AI is moving from mimicking fluency to genuine problem solving.[5]

The Seven-Month Doubling Cycle

We are all familiar with Moore’s Law, which observed that the number of transistors on a microchip doubles about every two years. In the current AI paradigm, that pace is lethargic. Recent reports indicate that the length of tasks AI agents can complete autonomously, measured by how long those tasks take skilled humans, is doubling roughly every seven months.
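The gap between the two doubling rates is easier to see as yearly multipliers. A quick sketch, taking the two-year Moore's Law period and the seven-month agent figure from the text; the helper name `growth_factor` is illustrative.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    """Multiplicative growth after `months` for a fixed doubling period."""
    return 2 ** (months / doubling_months)


# Per-year and per-decade multipliers for each doubling period.
for label, period in [("Moore's Law (24-month doubling)", 24),
                      ("AI agent tasks (7-month doubling)", 7)]:
    print(f"{label}: x{growth_factor(12, period):.2f} per year, "
          f"x{growth_factor(120, period):,.0f} per decade")
```

A two-year doubling compounds to about 1.41x per year (32x per decade); a seven-month doubling compounds to roughly 3.3x per year, and to well over 100,000x per decade if it were sustained.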

Much of this acceleration is attributed to the shift toward "System 2 thinking" in AI models. Earlier models answered by directly predicting the next likely word in a sequence (System 1). Newer iterations, such as o1 and o3, first generate an extended chain of reasoning in which they deliberate, check their own logic, and correct errors before responding. This extra deliberation allows them to tackle tasks that require sustained, coherent thought over long periods.
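The contrast between answering immediately and deliberating can be illustrated with a toy generate-and-verify loop. This is only an analogy, under the assumption that "System 2" behavior amounts to proposing candidate answers and checking them before committing; it is not how o1 or o3 are implemented internally, and `system1`, `system2`, and the square-root task are hypothetical.

```python
from typing import Callable, Optional, Sequence


def system1(candidates: Sequence[int]) -> int:
    # Fast path: commit to the first candidate without checking it.
    return candidates[0]


def system2(candidates: Sequence[int], verify: Callable[[int], bool],
            budget: int) -> Optional[int]:
    # Slow path: check each candidate, discard failures, and stop at the first
    # verified answer or when the deliberation budget is used up.
    for c in candidates[:budget]:
        if verify(c):
            return c
    return None


def is_root(x: int) -> bool:
    # Toy task (hypothetical): find the integer whose square is 1369.
    return x * x == 1369


guesses = [30, 40, 35, 37, 38]
print(system1(guesses))                      # commits to 30, which is wrong
print(system2(guesses, is_root, budget=10))  # checks candidates until 37 passes
print(system2(guesses, is_root, budget=3))   # budget too small: no verified answer
```

Raising `budget` plays the role of giving the model more time to think: more candidates get checked, at a higher cost.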

If this trend holds, the horizon compounds quickly: an agent that can handle a one-hour task today would handle two-hour tasks seven months from now, and tasks that fill a 40-hour work week in roughly three years. The "time horizon" (the length of a task, measured in human working time, that an AI can complete without human intervention) is expanding from minutes to days, and eventually to months.[5]
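The projection rests on plain doubling arithmetic: with doubling time `T`, a horizon `h0` grows to `h0 * 2**(t / T)`, and reaching a target horizon takes `T * log2(target / h0)` months. A sketch, assuming a one-hour starting horizon and standard work-calendar lengths (8-hour day, 40-hour week, 160-hour month); these inputs are illustrative, not measured values. The horizon measures how long a task takes a human, not how fast the AI finishes it.

```python
import math

DOUBLING_MONTHS = 7.0  # doubling time cited in the text


def horizon_after(h0_hours: float, months: float,
                  doubling: float = DOUBLING_MONTHS) -> float:
    """Task-length horizon (in human hours) after `months` of steady doubling."""
    return h0_hours * 2 ** (months / doubling)


def months_to_reach(h0_hours: float, target_hours: float,
                    doubling: float = DOUBLING_MONTHS) -> float:
    """Months until the horizon grows from h0_hours to target_hours."""
    return doubling * math.log2(target_hours / h0_hours)


# Hypothetical starting point: a one-hour horizon today.
for label, target in [("8-hour work day", 8), ("40-hour work week", 40),
                      ("160-hour work month", 160)]:
    m = months_to_reach(1.0, target)
    print(f"{label}: ~{m:.1f} months (~{m / 12:.1f} years)")
```

Under these assumptions, a one-hour horizon reaches a full work day in 21 months and a work month in a little over four years.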

The Counter-Argument: Compute Constraints

Is the singularity inevitable by next Tuesday? Not everyone agrees. The primary friction against this hyperbolic curve is the physical reality of hardware and energy. A recent research note titled "Forecasting AI Time Horizon Under Compute Slowdowns" introduces a critical variable: compute scarcity.

While algorithms are improving, the physical infrastructure (GPUs, data centers, and power plants) takes years to build. If we hit a wall in power availability or chip manufacturing, the "software-only singularity" might be delayed. The researchers at METR argue that if compute scaling slows down, the attainment of long-horizon autonomous agents could be pushed back significantly—potentially by seven years or more.[2][3]
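How a compute slowdown turns into a delay can be sketched with a deliberately simple, hypothetical model: the horizon doubles every 7 months until some switch point, after which the doubling time lengthens. This is not METR's model; the one-hour start, 160-hour target, 24-month switch point, and 14-month slowed doubling time are assumptions chosen only to show the mechanics.

```python
import math


def months_to_target(h0: float, target: float, fast: float, slow: float,
                     switch_month: float) -> float:
    """Months for the horizon to grow from h0 to target when it doubles every
    `fast` months until `switch_month`, and every `slow` months afterwards."""
    doublings_needed = math.log2(target / h0)
    doublings_before_switch = switch_month / fast
    if doublings_needed <= doublings_before_switch:
        return doublings_needed * fast  # target reached before the slowdown
    remaining = doublings_needed - doublings_before_switch
    return switch_month + remaining * slow


baseline = months_to_target(1.0, 160.0, fast=7.0, slow=7.0, switch_month=24.0)
slowed = months_to_target(1.0, 160.0, fast=7.0, slow=14.0, switch_month=24.0)
print(f"no slowdown: {baseline:.1f} months; with slowdown: {slowed:.1f} months")
print(f"delay: {slowed - baseline:.1f} months (~{(slowed - baseline) / 12:.1f} years)")
```

Because every doubling after the switch takes longer, the delay grows with how far off the target is and how early the slowdown begins.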

The Verdict

Whether the singularity arrives on a random Tuesday in 2026 or is delayed by physical constraints until the 2030s, the trajectory is clear. We are living through a period of technological convergence where yesterday's "safe" predictions quickly become today's history.

As Pedersen notes, asking "if" is the wrong question. The data suggests we should be preparing for a world where the capabilities of our tools outpace our ability to benchmark them.

Listen to the episode

Predicting the Singularity: The Tuesday Deadline