On March 3, 2026, the software world witnessed a historic anomaly. A project that started as a weekend experiment in late 2025 eclipsed React—the library powering much of the modern web—to become the most-starred repository in GitHub history. That project is OpenClaw.

Surpassing 250,000 stars in roughly 100 days, OpenClaw represents a fundamental shift in how we interact with artificial intelligence. It focuses not on conversation, but on action. It is the transition from AI that talks to AI that does.

In this episode of the Pody podcast, we explore the architecture, the viral growth, and the security implications of this open-source phenomenon.

The Lobster That Ate GitHub

The numbers surrounding OpenClaw are staggering. In late January 2026, the project was still a relatively small experiment known as "Clawdbot." By early March, roughly 100 days after its start in late 2025, it had cleared 250,000 stars and 47,000 forks and had attracted over 1,000 contributors.[2]

For comparison, React took thirteen years to accumulate 243,000 stars. OpenClaw achieved this velocity by tapping into a massive, unserved demand: developers didn't just want a smarter chatbot; they wanted an assistant that lived on their own hardware and could perform real work.[6]
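To put that velocity in perspective, here is a back-of-the-envelope calculation using only the figures quoted above. The day counts are approximations (100 days for OpenClaw, thirteen 365-day years for React), so treat the result as an order-of-magnitude comparison rather than a precise measurement.

```python
# Figures quoted in the article; the day counts are rough approximations.
OPENCLAW_STARS = 250_000
OPENCLAW_DAYS = 100
REACT_STARS = 243_000
REACT_DAYS = 13 * 365  # thirteen years, ignoring leap days

# Average stars gained per day over each project's quoted time span.
openclaw_rate = OPENCLAW_STARS / OPENCLAW_DAYS
react_rate = REACT_STARS / REACT_DAYS
ratio = openclaw_rate / react_rate

print(f"OpenClaw: {openclaw_rate:,.0f} stars/day")
print(f"React:    {react_rate:,.0f} stars/day")
print(f"Ratio:    about {ratio:.0f}x")
```

On these assumptions, OpenClaw averaged about 2,500 stars per day against roughly 51 for React, a ratio of nearly 49 to 1.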

Created by Austrian developer Peter Steinberger, founder of PSPDFKit, the project was born from frustration. Steinberger wanted an AI that could bridge the gap between high-level reasoning (LLMs) and low-level execution (scripts and shell commands).[4] The result was a tool that Andrej Karpathy described as "genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently."[2]

The Gateway Architecture: Agency Through Integration

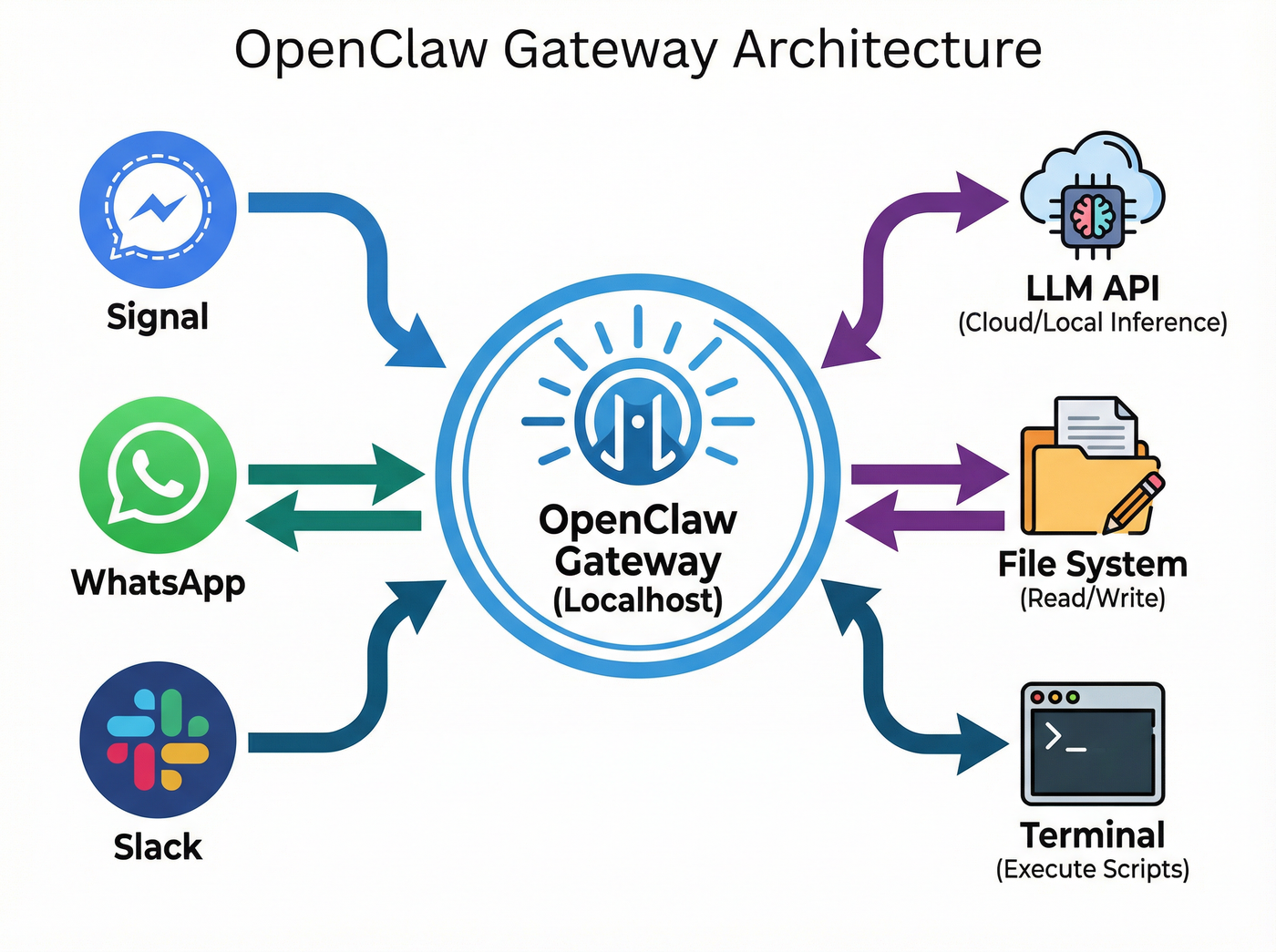

OpenClaw differs from previous autonomous attempts like AutoGPT through its pragmatic architecture. Instead of trying to build a monolithic "brain," it functions as a hub-and-spoke system known as the Gateway Architecture.

The central hub runs locally on the user's own hardware and maintains a WebSocket connection. It acts as a bridge between:

- The Brain: External LLMs (like GPT-5.4 or Claude) or local models (via Ollama).

- The Hands: Your local file system, terminal, and browser.

- The Interface: Messaging apps you already use, such as WhatsApp, Telegram, Discord, and Signal.[1]

This allows a user to send a text message via Signal saying, "Check the server logs for errors and summarize them," and have the agent actually run `grep` commands on the local machine and reply with the results. It is "Bring Your Own Key" (BYOK) infrastructure that keeps the execution layer private and local.[4]
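The hub-and-spoke flow described above can be sketched in a few lines. This is a minimal illustration, not OpenClaw's actual code: the names `Gateway`, `ToolCall`, and `stub_brain` are hypothetical, the "brain" is a hard-coded stand-in for an LLM call, and a pure-Python line search replaces `grep` so the example runs anywhere.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Callable, Dict


@dataclass
class ToolCall:
    """An action the brain asks the hands to perform (hypothetical shape)."""
    tool: str
    argument: str


def stub_brain(message: str) -> ToolCall:
    """Stand-in for an LLM: maps an incoming message to a single tool call."""
    if "log" in message.lower():
        return ToolCall(tool="search_log", argument="ERROR")
    return ToolCall(tool="echo", argument=message)


class Gateway:
    """Local hub: receives a message, asks the brain, runs a local tool."""

    def __init__(self, brain: Callable[[str], ToolCall], log_path: Path) -> None:
        self.brain = brain
        self.log_path = log_path
        # The "hands": only tools registered here can ever be executed.
        self.hands: Dict[str, Callable[[str], str]] = {
            "search_log": self._search_log,
            "echo": lambda text: text,
        }

    def _search_log(self, needle: str) -> str:
        """Pure-Python substitute for running grep on a local log file."""
        lines = self.log_path.read_text(encoding="utf-8").splitlines()
        hits = [line for line in lines if needle in line]
        return f"{len(hits)} matching line(s):\n" + "\n".join(hits)

    def handle_message(self, message: str) -> str:
        """Interface -> brain -> hands -> reply sent back to the interface."""
        call = self.brain(message)
        tool = self.hands.get(call.tool)
        if tool is None:
            return f"Refused: unknown tool {call.tool!r}"
        return tool(call.argument)
```

In a real deployment, the message would arrive from a chat app and the brain would be a remote or local model. The point of the sketch is the division of labor: the model only proposes an action, while the hub on the user's machine decides whether and how to run it.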

Radical Transparency: Memory as Markdown

One of the most innovative—and controversial—decisions in OpenClaw's design is its approach to memory. Modern AI typically uses vector databases and embeddings to store long-term information. These are powerful but opaque; a user cannot easily look inside a vector database to see what the AI knows.

OpenClaw takes a "lo-fi" approach. It stores memory as plain Markdown files directly on the user's hard drive.[1]

This means you can browse to a folder on your computer and open a file (often called `Soul.md` or similar) to read exactly what the agent thinks your preferences are. If the agent misremembers a fact, you don't need to retrain it; you simply open the text file, delete the wrong line, and hit save. This "radical transparency" was a key factor in its viral adoption among developers who value control over complexity.[6]
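To make the idea concrete, here is a small sketch of file-backed memory. It assumes one fact per Markdown bullet line; that layout and the helper names `remember`, `recall`, and `forget` are illustrative assumptions, not OpenClaw's actual file format or API.

```python
from pathlib import Path


def remember(memory_file: Path, fact: str) -> None:
    """Append one fact as a Markdown bullet (assumed format: '- fact')."""
    with memory_file.open("a", encoding="utf-8") as handle:
        handle.write(f"- {fact}\n")


def recall(memory_file: Path) -> list[str]:
    """Return every stored fact, with the bullet prefix stripped."""
    if not memory_file.exists():
        return []
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    return [line[2:] for line in lines if line.startswith("- ")]


def forget(memory_file: Path, wrong_fact: str) -> None:
    """Do in code what a user would do in a text editor: delete one line."""
    kept = [
        line
        for line in memory_file.read_text(encoding="utf-8").splitlines()
        if line != f"- {wrong_fact}"
    ]
    memory_file.write_text("\n".join(kept) + "\n", encoding="utf-8")
```

Because the store is just text, `forget` is nothing more than a line filter, and the same correction could be made by hand in any editor. The trade-off is that there is no semantic search: recall here is a plain read of the whole file.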

The Security Paradox: 'ClawHavoc' and Beyond

With great agency comes great risk. By giving an AI permission to read files and execute shell commands, users are effectively creating a platform for "untrusted code execution with persistent credentials."[2]
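One generic way to narrow that risk is to check every proposed command against an allowlist before it runs. The sketch below illustrates the idea only; it is not drawn from OpenClaw's codebase, and the policy (`ALLOWED_PROGRAMS`, `FORBIDDEN_CHARS`) is a hypothetical example that is deliberately conservative.

```python
import shlex

# Hypothetical policy: the only programs the agent may launch.
ALLOWED_PROGRAMS = {"grep", "ls", "cat", "tail"}
# Characters that could chain, pipe, redirect, or substitute commands.
FORBIDDEN_CHARS = set(";|&<>`$")


def is_command_allowed(command: str) -> bool:
    """Return True only for a single allowlisted program with plain arguments."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar malformed input are rejected outright.
        return False
    if not tokens:
        return False
    if tokens[0] not in ALLOWED_PROGRAMS:
        return False
    # Conservative: rejects even quoted arguments containing these characters.
    return not any(ch in FORBIDDEN_CHARS for token in tokens for ch in token)
```

A check like this would pass `grep -i error server.log` but reject `grep error server.log; rm -rf ~` or any call to a program outside the list. An allowlist is only one layer: it does nothing about what an allowed program is permitted to read, which is why stored credentials remain a concern.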

In February 2026, security researchers identified a critical vulnerability (CVE-2026-25253) that allowed remote code execution via malicious "Skills" downloaded from the community marketplace. This led to the "ClawHavoc" campaign, where bad actors engineered skills to exfiltrate personal data.[5]

The security concerns are severe enough that major tech firms, including Meta, have banned the use of OpenClaw on employee devices.[2] Consequently, the project's recent move to an independent foundation, sponsored by OpenAI, is seen as a necessary step to professionalize the security infrastructure around this powerful tool.[5]

Listen to the episode

Dive deeper into the technical specifications and the fascinating story behind Peter Steinberger's creation in our full episode.

OpenClaw and the Rise of Viral AI Agents: A Technical Breakdown

Sources

- Complete Analysis of OpenClaw: Architecture, Security, and Future

- The Essential Guide to OpenClaw: The Fastest-growing Project in GitHub history

- OpenClaw: The Open-Source AI Agent That Took the World by Storm

- OpenClaw 2026: 234K Stars, OpenAI & Security Deep Dive

- The Agentic Revolution: Inside OpenClaw and the Future of Autonomous AI